Conjecture: how far can rollups + data shards scale in 2030? 14 million TPS!

This post is conjecture and extrapolation. Please treat it as a fun thought experiment rather than as serious research.

Rollups are bottlenecked by data availability. So, it’s all about how Ethereum scales up data availability. Of course, other bottlenecks come into play at some point: execution clients/VM at the rollup level, capacity for state root diffs and proofs on L1 etc. But those will continue to improve, so let’s assume data availability is always the bottleneck. So how do we improve data availability? With data shards, of course. But from there, there’s further room for expansion.

There are two elements to this:

1) Increasing the number of shards

2) Expanding DA per shard

1) is fairly straightforward: the current specification defines 1,024 shards. So, we can assume we'll be at 1,024 shards by 2030, given how well the beacon chain has been adopted in such a high-risk phase.

2) This is trickier. While it's tempting to assume data per shard will increase alongside Wright's, Moore's and Nielsen's laws, in reality Ethereum's gas limit increases have followed a linear trend (R² = 0.925) in its brief history thus far. Of course, gas limits and data availability are very different, and data can be scaled much less conservatively without worrying about things like compute-oriented DoS attacks. So, I'd expect the increase in data per shard to land somewhere between the linear trend and the exponential laws.

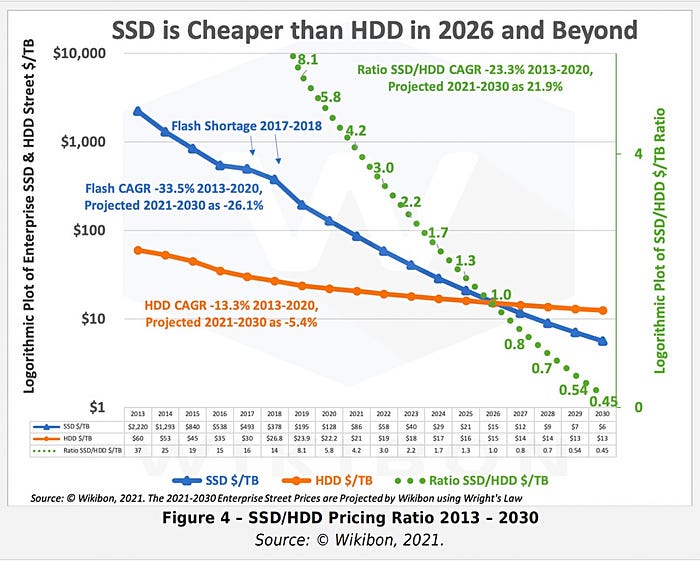

Nielsen's Law calls for a ~50x increase in average internet bandwidth by 2030. For storage, we're looking at a ~20x increase. A linear trend, as Ethereum's gas limit increases have followed thus far, gives a conservative ~7x increase. Considering all of this, I believe a ~10x increase in data per shard is a fair conservative estimate. Theoretically, it could be much higher: some time around the middle of the decade, SSDs could become so cheap that the bottleneck becomes internet bandwidth, in which case we could scale as high as ~50x. But let's consider the most conservative case of ~10x.
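As a rough sanity check on the ~50x bandwidth figure, here is a minimal sketch of compound growth. It assumes Nielsen's Law is read as roughly 50% growth per year and that the horizon is 9 to 10 years (counting from 2021 to 2030); the function name `compound_growth` is hypothetical and exists only for this illustration.

```python
def compound_growth(annual_rate, years):
    """Total growth multiple after compounding `annual_rate` for `years` years."""
    return (1 + annual_rate) ** years


# Assumption: ~50% bandwidth growth per year (the usual statement of Nielsen's Law).
# Assumption: a horizon of 9 or 10 years, depending on where you start counting.
for years in (9, 10):
    multiple = compound_growth(0.5, years)
    print(f"{years} years at 50%/year: ~{multiple:.0f}x")
```

The two horizons give multiples of roughly 38x and 58x, which bracket the ~50x figure used here.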

Given this, we'd expect each data shard to target 2.480 MB per block. Multiplied by 1,024, that's 2.48 GB per block. Assuming a 12 second block time, that's data availability of 0.206 GB/s, or 2.212 × 10⁸ bytes per second. Given an ERC20 transfer will consume 16 bytes with a rollup, we're looking at 13.82 million TPS.
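The arithmetic above can be sketched in a few lines of Python. This is a back-of-the-envelope sketch, not a model of the protocol: it assumes MB and GB are binary units (1 MB = 2^20 bytes), which is what makes 2.48 MB × 1,024 come out to 2.48 GB, and the function and parameter names are hypothetical.

```python
# Assumption: "MB" in the text means 2**20 bytes (binary units).
BYTES_PER_MB = 2**20


def rollup_tps(mb_per_shard_block, shards, block_time_s, bytes_per_tx):
    """Transactions per second if data availability is the only bottleneck."""
    bytes_per_block = mb_per_shard_block * BYTES_PER_MB * shards
    bytes_per_second = bytes_per_block / block_time_s
    return bytes_per_second / bytes_per_tx


# Inputs taken from the text: 2.48 MB per shard per block, 1,024 shards,
# 12 second blocks, 16 bytes per rollup ERC20 transfer.
tps = rollup_tps(2.48, 1024, 12, 16)
print(f"{tps / 1e6:.2f} million TPS")
```

Without intermediate rounding this comes out at roughly 13.87 million TPS. The 13.82 million figure follows from rounding the throughput to 0.206 GB/s before dividing by 16 bytes.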

Yes, that’s 13.82 million TPS. Of course, there will be much more complex transactions, but it’s fair to say we’ll be seeing multi-million TPS across the board. At this point, the bottleneck is surely at the VM and client level for rollups, and it’ll be interesting to see how they innovate so execution keeps up with Ethereum’s gargantuan data availability. We’ll likely need parallelized VMs running on GPUs to keep up, and perhaps even rollup-centric consensus mechanisms for sequencers.

It doesn’t end here, though. This is the most conservative scenario. In reality, there’ll be continuous innovation on better security, erasure coding, data availability sampling etc. that’d enable larger shards, better shards, and more shards. Not to mention, there’ll be additional scaling techniques built on top of rollups.

Update, 8th October 2021: Turns out the bottleneck for data shards is not disk but bandwidth — thanks to Justin Drake for correcting this. So, 50 million TPS is on the table.